What is the Bubblegum Sequencer?

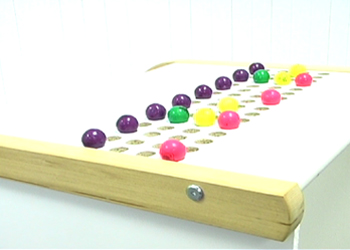

The Bubblegum Sequencer is a physical step sequencer that lets you create drum loops by arranging colored balls on a tangible surface. It generates MIDI events and can be used as an input device to control audio hardware and software. Finally, people can no longer claim that electronic music isn't handmade.

Here's how it works: the canvas is a grid of holes, arranged in several rows of 16 holes each. On it, you arrange colored gumballs. The 16 columns represent the sixteenth notes of one measure, and each color is mapped to a specific sample.
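To make the grid-to-sound mapping concrete, here is a minimal Java sketch of that data model. It assumes each hole is either empty or holds exactly one ball, and that each color's sample is triggered by a fixed MIDI note number. The `BallColor` palette, the `StepGrid` class and its methods are hypothetical names for illustration, not taken from the released software.

```java
import java.util.ArrayList;
import java.util.EnumMap;
import java.util.List;
import java.util.Map;

// Hypothetical palette: the actual ball colors used by the sequencer are not specified.
enum BallColor { RED, GREEN, BLUE, YELLOW }

/** A grid of holes: several rows, 16 columns (one per sixteenth note of a measure). */
final class StepGrid {
    static final int STEPS = 16;

    private final BallColor[][] holes; // holes[row][step]; null means the hole is empty
    private final Map<BallColor, Integer> sampleNote = new EnumMap<>(BallColor.class);

    StepGrid(int rows, Map<BallColor, Integer> colorToNote) {
        this.holes = new BallColor[rows][STEPS];
        this.sampleNote.putAll(colorToNote); // color -> MIDI note that triggers its sample
    }

    void place(int row, int step, BallColor color) { holes[row][step] = color; }

    void clear(int row, int step) { holes[row][step] = null; }

    /** MIDI notes to trigger at the given column, one per occupied hole with a mapped color. */
    List<Integer> notesAt(int step) {
        List<Integer> notes = new ArrayList<>();
        for (BallColor[] row : holes) {
            BallColor c = row[step];
            if (c != null && sampleNote.containsKey(c)) {
                notes.add(sampleNote.get(c));
            }
        }
        return notes;
    }
}
```

For example, a red ball in columns 0, 4, 8 and 12 of one row would make `notesAt` return the red note on every quarter-note beat of the measure.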

Because the output is generated in the form of MIDI events, the Bubblegum Sequencer can be used to control any kind of audio hardware or software.
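The following sketch shows what emitting such events could look like with the standard `javax.sound.midi` API. It assumes the drum samples listen on General MIDI channel 10 (index 9 in Java) and that the system's default receiver is an acceptable destination; the `MidiOut` class and its methods are hypothetical, and the released software may route its output differently.

```java
import javax.sound.midi.InvalidMidiDataException;
import javax.sound.midi.MidiSystem;
import javax.sound.midi.MidiUnavailableException;
import javax.sound.midi.Receiver;
import javax.sound.midi.ShortMessage;

/** Hypothetical helper that turns triggered notes into MIDI note-on/note-off messages. */
final class MidiOut {
    // Assumption: drum samples are addressed on General MIDI channel 10 (zero-based index 9).
    static final int DRUM_CHANNEL = 9;

    private final Receiver receiver;

    MidiOut(Receiver receiver) { this.receiver = receiver; }

    /** Opens whatever default MIDI receiver the operating system's MIDI setup provides. */
    static MidiOut openDefault() throws MidiUnavailableException {
        return new MidiOut(MidiSystem.getReceiver());
    }

    void noteOn(int note, int velocity) { send(ShortMessage.NOTE_ON, note, velocity); }

    void noteOff(int note) { send(ShortMessage.NOTE_OFF, note, 0); }

    private void send(int command, int note, int velocity) {
        try {
            // A timestamp of -1 means "no timestamp": the message is delivered immediately.
            receiver.send(new ShortMessage(command, DRUM_CHANNEL, note, velocity), -1);
        } catch (InvalidMidiDataException e) {
            throw new IllegalArgumentException("invalid MIDI data: note=" + note + ", velocity=" + velocity, e);
        }
    }
}
```

Passing the `Receiver` in through the constructor keeps the class independent of any particular device, so the same code can drive a hardware synthesizer, a software instrument, or a test stub.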

If you'd like to know more about the Bubblegum Sequencer, read our course paper.

Demo

Here's a video showing some of the Bubblegum Sequencer's current features:

(Download video as .mov file)

How it's done

The Bubblegum Sequencer senses the position of the balls through a video camera mounted underneath the surface. The captured image is processed by a computer vision routine that computes the average color in each hole. The colors are quantized and mapped to notes. For each note, a MIDI event is generated and sent to the operating system's MIDI bus.
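The averaging and quantization steps can be sketched in plain Java as follows. This is an illustration under several assumptions: the frame is available as an `int[]` of packed RGB pixels, each hole is sampled through a square window around a known center, and quantization picks the nearest reference color by squared RGB distance. `HoleSampler` and its methods are hypothetical and do not use ImageJ, which the actual software relies on.

```java
import java.util.Map;

/** Hypothetical helpers for reading one hole's color from a camera frame. */
final class HoleSampler {
    /** Average RGB over a square window of side 2*r+1 centered on (cx, cy), clipped to the image. */
    static int[] averageColor(int[] rgb, int width, int height, int cx, int cy, int r) {
        long sr = 0, sg = 0, sb = 0;
        int n = 0;
        for (int y = Math.max(0, cy - r); y <= Math.min(height - 1, cy + r); y++) {
            for (int x = Math.max(0, cx - r); x <= Math.min(width - 1, cx + r); x++) {
                int p = rgb[y * width + x];
                sr += (p >> 16) & 0xFF; // red channel
                sg += (p >> 8) & 0xFF;  // green channel
                sb += p & 0xFF;         // blue channel
                n++;
            }
        }
        if (n == 0) throw new IllegalArgumentException("window lies outside the image");
        return new int[] { (int) (sr / n), (int) (sg / n), (int) (sb / n) };
    }

    /** Returns the label of the palette entry closest (squared RGB distance) to the given color. */
    static String quantize(int[] color, Map<String, int[]> palette) {
        String best = null;
        long bestDist = Long.MAX_VALUE;
        for (Map.Entry<String, int[]> e : palette.entrySet()) {
            int[] ref = e.getValue();
            long dr = color[0] - ref[0], dg = color[1] - ref[1], db = color[2] - ref[2];
            long d = dr * dr + dg * dg + db * db;
            if (d < bestDist) {
                bestDist = d;
                best = e.getKey();
            }
        }
        return best;
    }
}
```

Including a palette entry for the appearance of an empty hole lets the same nearest-color test decide whether a hole is occupied at all.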

The computer vision software is written in Java and makes use of the ImageJ image processing library.

Updates

In addition to the original features shown in the video, we've implemented a few more:

- Tempo tapping: Tap three or more times on a pressure-sensitive area on the side of the sequencer to set a new tempo for the playback loop.

- Visual feedback: The sequencer now sports a row of running LEDs that indicates the current position. We've also experimented with projecting animations of popping bubbles onto the surface to give direct feedback about which beats are currently playing.

- Melody mode: Instead of just playing beats at a fixed pitch, we developed a mode in which the vertical position of a ball encodes the pitch of the sample played. To extend the range, two balls can be combined, giving a total of seven possible notes on the blues scale.

- Voiceover mode: To make the sample mappings less reliant on computer-based UIs, we wrote a Processing application that lets you record voice samples for each color at playback time. You record by holding down the spacebar and speaking into a microphone.
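Tempo tapping comes down to averaging the intervals between taps. The sketch below assumes each tap marks a quarter-note beat, so one sixteenth-note column lasts a quarter of a beat, and that a pause longer than two seconds starts a new tap sequence; `TapTempo`, its methods and the two-second threshold are hypothetical. `currentStep` shows how the same step duration could drive a running-LED position indicator.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical tap-tempo estimator for a 16-step loop. */
final class TapTempo {
    static final int MIN_TAPS = 3;         // three or more taps set a new tempo
    static final long RESET_GAP_MS = 2000; // assumption: a longer pause starts a new tap sequence

    private final List<Long> taps = new ArrayList<>();

    /** Records a tap at timeMs; returns the new BPM, or -1 if there are not enough taps yet. */
    double tap(long timeMs) {
        if (!taps.isEmpty() && timeMs - taps.get(taps.size() - 1) > RESET_GAP_MS) {
            taps.clear();
        }
        taps.add(timeMs);
        if (taps.size() < MIN_TAPS) return -1;
        long span = taps.get(taps.size() - 1) - taps.get(0);
        if (span <= 0) return -1;
        // The mean of the successive intervals equals (last - first) / (number of intervals).
        double avgIntervalMs = (double) span / (taps.size() - 1);
        return 60_000.0 / avgIntervalMs;
    }

    /** Duration of one sixteenth-note column, assuming each tap marks a quarter-note beat. */
    static double stepMs(double bpm) { return 60_000.0 / bpm / 4.0; }

    /** Which of the 16 columns is playing after elapsedMs of playback. */
    static int currentStep(long elapsedMs, double bpm) {
        return (int) (elapsedMs / stepMs(bpm)) % 16;
    }
}
```

Taps at 0, 500 and 1000 ms yield 120 BPM, which corresponds to 125 ms per column.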
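The text does not say exactly how ball positions are turned into one of the seven notes, so the following sketch only covers the final step. It assumes the seven notes are the six-note blues scale (root, minor third, fourth, flat fifth, fifth, minor seventh) plus the octave, and takes a pitch index from 0 to 6 as input; `BluesScale` and `note` are hypothetical names.

```java
/** Hypothetical mapping from a melody-mode pitch index to a MIDI note on the blues scale. */
final class BluesScale {
    // Assumption: six-note blues scale plus the octave, as semitone offsets from the root.
    private static final int[] OFFSETS = { 0, 3, 5, 6, 7, 10, 12 };

    /** Maps a pitch index 0..6 (however it is derived from ball positions) to a MIDI note. */
    static int note(int rootNote, int pitchIndex) {
        if (pitchIndex < 0 || pitchIndex >= OFFSETS.length) {
            throw new IllegalArgumentException("pitch index must be 0.." + (OFFSETS.length - 1));
        }
        return rootNote + OFFSETS[pitchIndex];
    }
}
```

With middle C (MIDI note 60) as the root, indices 0 to 6 give C, E♭, F, F♯, G, B♭ and the C an octave above.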

Who

The Bubblegum Sequencer is a project by Hannes Hesse, Andrew McDiarmid and Rosie Han.

It was conceived and created in the course "Theory and Practice of Tangible User Interfaces" at UC Berkeley's School of Information in the fall semester of 2007.

Press

De-Bug Magazine Nr. 123 (2008) (PDF); De-Bug Magazine Nr. 135 (2009) (PDF)

KQED on the Bubblegum Sequencer going to Maker Faire (about 8 minutes into the show)

Video report on the Bubblegum Sequencer on CurrentTV

Select blogs:

MAKE: Blog

Engadget

Gizmodo

Neatorama

De-Bug Magazine

Spreeblick

LA Times: The Guide

and more...

Software

I've released a snapshot of the current software on Google Code. However, it is mostly undocumented and has a few platform and hardware dependencies. I can't offer any qualified support at this point, so you'll probably need to hack around for a while to get it running.

The software is Java-based and makes use of a couple of libraries (mostly ImageJ for image processing and for access to the pixel feed from the webcam). The part that grabs the image stream from the webcam is based on Wayne Rasband's QuickTime Capture example.

Hardware

I currently use a Logitech QuickCam Pro, which you can probably get for about $60. I use a Mac (Intel, Mac OS X 10.5), but we've also had the software running on a Windows XP machine and on a PowerPC-based Mac. Some platform-specific components need to be installed, such as a MIDI driver for the Mac (http://www.humatic.de/htools/mmj.htm).
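To check whether a MIDI driver such as mmj is visible to Java, it can help to list the devices the Java Sound API reports. This is a minimal sketch using only standard `javax.sound.midi` calls; it assumes the installed driver exposes its devices through Java Sound, and `MidiDevices.describe` is a hypothetical helper name.

```java
import java.util.ArrayList;
import java.util.List;
import javax.sound.midi.MidiDevice;
import javax.sound.midi.MidiSystem;

/** Hypothetical diagnostic: one line per MIDI device the Java Sound API can currently see. */
final class MidiDevices {
    static List<String> describe() {
        List<String> lines = new ArrayList<>();
        for (MidiDevice.Info info : MidiSystem.getMidiDeviceInfo()) {
            lines.add(info.getName() + " (" + info.getVendor() + "): " + info.getDescription());
        }
        return lines;
    }
}
```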

Contact us

If you would like to know more about the Bubblegum Sequencer, send us an email.
